Sprinkling AI into 3D Animation & CGI Courses

Brief Roadmap for Sprinkling in AI for Different Courses

AI-related pedagogy will likely, in time, be included in some way in all of our program's courses, either directly or indirectly. I cannot guarantee which courses will include this content in the near future, since that largely depends on who is assigned to teach each course. The following are examples of courses I had in mind when developing my AI portfolio material:

Introduction to 3D Animation (574-131-DW)

This course could include any or all of the topics within this AI portfolio, time permitting.

Sculpting Human Anatomy (510-293-DW)

This course is traditionally a hands-on fine arts course, but it presents a good opportunity to touch on topics such as Scott Eaton's work.

History of Film Production Techniques (530-292-DW)

This course is largely about the history of cinema, but it will surely begin to include lessons about how AI is finding its place in the story of how films are made.

3D Animation Techniques (574-232-DW)

This course would be a good place for students to learn more about how AI is being used with motion capture, motion editing, and animation.

Digital Video and Photography (574-241-DW)

The next iteration of this course may include Unreal Engine and conversations about how digital photography technology and techniques have already been profoundly affected by AI.

Digital Colours and Textures (574-261-DW)

This is a course that teaches students traditional art fundamentals surrounding colour and texture, as well as the technical aspects of various file formats and compression considerations. The course also teaches students to create procedural materials of any kind, using visual-scripting nodes. The introduction of AI into the node-based workflows, as well as the many implementations of AI in Adobe software, presents opportunities to have sidebar discussions about how AI is driving these tools.

Matte Painting (574-362)

This course would be a good place to touch on AI topics such as Nvidia’s GauGAN Beta project.

Lights, Camera and Rendering I and II (574-381-DW and 574-482-DW)

These courses would be a great place to discuss the role AI is playing in speeding up rendering workflows, to the point of achieving photoreal results in real time. This could include topics such as Nvidia's AI-based "super resolution": rendering fewer pixels and then using AI to construct sharp, higher-resolution images.
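
To make "rendering fewer pixels" concrete, the short Python sketch below compares how many pixels a renderer actually shades at a lower internal resolution with the number of pixels in the upscaled output. The resolutions are illustrative assumptions, not settings taken from any particular Nvidia product, and the sketch only covers the arithmetic, not the AI reconstruction step itself.

```python
def shaded_fraction(internal_w, internal_h, output_w, output_h):
    """Fraction of the final frame's pixels that are actually rendered
    before an upscaler reconstructs the rest."""
    return (internal_w * internal_h) / (output_w * output_h)


# Illustrative (assumed) case: render at 1920x1080, output a 3840x2160 frame.
fraction = shaded_fraction(1920, 1080, 3840, 2160)
print(f"Rendered pixels: {fraction:.0%} of the final frame")
```

In this example the network has to infer three out of every four output pixels, which is where the potential time savings come from.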

Character Rigging (574-473-DW)

This would be an ideal course for touching on AI topics such as AI auto-riggers and AI auto-skinning, as well as the problems AI has yet to solve in this area (though it will likely solve them eventually).

Acting For Animation (560-591-DW)

This would be a great course to touch on topics such as deepfakes, and how animators & actors can work with AI to create performances that otherwise would never be possible.

Career Development (574-691-DW)

This course would be a good place to discuss how AI automation is affecting entry-level jobs such as rotoscoping. It would also be a good place to discuss the underlying algorithms behind platforms like LinkedIn and Facebook, both of which can be important when job searching. The course could also touch on AI-related concerns in the job search itself (such as the issues presented in the film "Coded Bias").

What is “AI”?

Learning about AI in 3D & CGI should begin with introductory conversations about what AI is (and what it isn’t!).

There are many great resources online for this, one of which is "AI Demystified", a presentation given by some of the 2019-2020 Dawson AI Fellows during Dawson College's Pedagogical Day on 15 January 2020.

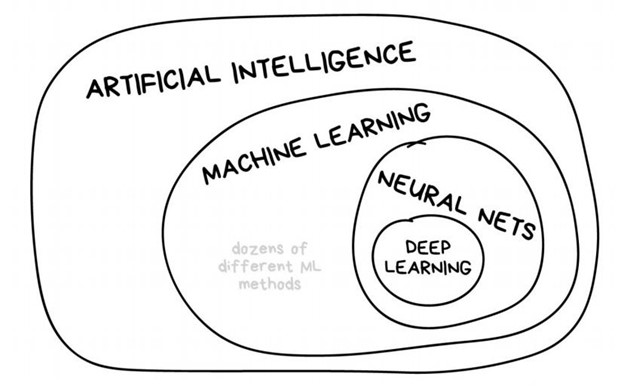

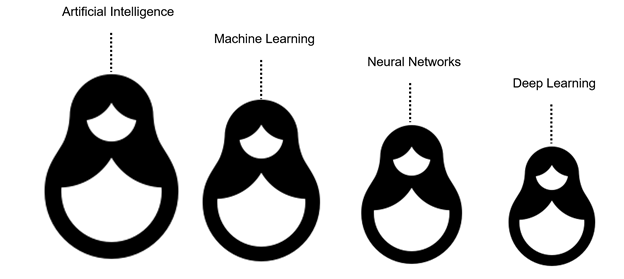

"Artificial Intelligence Explained in Simple English" is another online resource that can help introduce some of the most basic concepts we encounter when discussing AI, Machine Learning, Neural Networks, and Deep Learning.

https://medium.com/mytake/artificial-intelligence-explained-in-simple-english-part-1-2-1b28c1f762cf

"Artificial intelligence explained in 2 minutes: What exactly is AI?" is one example of a brief explainer video that can help ensure all students understand basic AI concepts before moving on to more specific applications of AI.

https://www.youtube.com/watch?v=UdE-W30oOXo

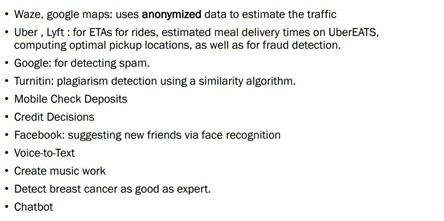

Some Areas Where AI Is Already At Work

The above list comes from the AI Demystified presentation. It helps students understand that AI is already informing many of the tools and realities that they interact with on a daily basis.

Similar lists could be drawn up for tools and apps specific to VFX, games, and 3D animation (any such list will be an evolving work in progress, since the industry changes constantly).

Potential class project:

One interesting assignment would be to have students work together to research some of the many examples of everyday applications of AI and machine learning within the 3D industries, and to present some of these to the class.

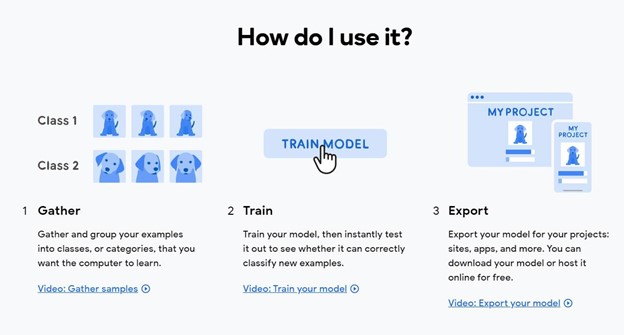

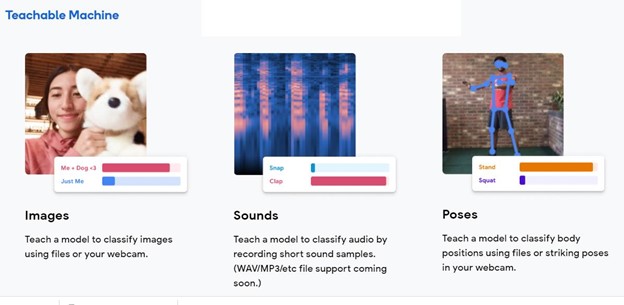

Google’s Teachable Machine

Students’ first experiences of Machine Learning will be more likely to stick if the experiences are fun, visual and interactive.

“Teachable Machine” is a web-based tool that makes creating machine learning models fast, easy, and accessible to everyone.

It's a great resource for letting students create their own ML models:

https://teachablemachine.withgoogle.com/
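
Teachable Machine hides the training step behind its interface. The toy Python sketch below illustrates the basic idea of "teaching" a classifier from examples: it averages each class's example feature vectors and labels a new sample by the closest average (a nearest-centroid classifier). This is a simplification for classroom discussion, not how Teachable Machine itself is implemented; the class names and feature values are hypothetical.

```python
import math


def train(examples):
    """examples: dict mapping class name -> list of feature vectors.
    Returns one centroid (the mean vector) per class."""
    centroids = {}
    for label, vectors in examples.items():
        n = len(vectors)
        centroids[label] = [sum(values) / n for values in zip(*vectors)]
    return centroids


def predict(centroids, sample):
    """Return the class whose centroid is closest to the sample."""
    return min(centroids, key=lambda label: math.dist(centroids[label], sample))


# Hypothetical 2D features (e.g. average brightness, amount of motion).
examples = {
    "thumbs_up": [[0.9, 0.1], [0.8, 0.2], [0.85, 0.15]],
    "wave": [[0.3, 0.9], [0.2, 0.8], [0.25, 0.85]],
}
model = train(examples)
print(predict(model, [0.7, 0.3]))
```

Adding more (and more varied) examples to a class moves its centroid, which mirrors what students see when they add samples in Teachable Machine.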

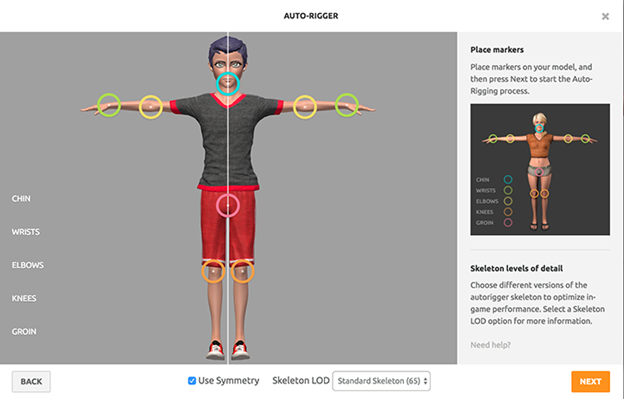

Using AI (Machine Learning) for rigging a 3D character to be used with mocap and/or 3D Animation

Rigging a CG character has traditionally been a time-consuming process requiring specialized technical and artistic knowledge of both anatomy and the computer software tools designed to create the illusion of anatomy.

Mixamo is an example of machine learning being used to automate the process of rigging a bipedal character.

This exercise can be used to introduce the process of rigging a simple character. It can also be used to discuss machine learning and the challenges of automating certain processes. The complexity of Mixamo's resulting rigs is limited, but they offer a glimpse of what is likely to come in the next few years.

To rig a character using Mixamo, simply:

- Place the markers on key points (wrists, elbows, knees, and groin) by following the onscreen instructions.

- Confirm your marker placement to continue. The rigging process will begin once you confirm; it usually takes a few minutes to complete.

- Once the rigging process is complete, you'll be taken back to the Mixamo interface. You can download your rigged character by selecting the Download button in the editor panel, or apply animations by selecting the Find Animations button.
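
Auto-riggers also generate skin weights, which control how strongly each joint moves each vertex of the mesh. Mixamo's actual method is not described here; the Python sketch below uses a deliberately naive heuristic (inverse-distance weighting to joint positions, normalized to sum to 1) purely to show what an automatic skinning step has to produce. The joint names and coordinates are hypothetical.

```python
import math


def skin_weights(vertex, joints, power=2.0):
    """Naive automatic skinning (an assumed heuristic, not Mixamo's):
    weight each joint by 1 / distance**power, then normalize so the
    weights for this vertex sum to 1."""
    raw = {}
    for name, position in joints.items():
        d = math.dist(vertex, position)
        if d == 0.0:
            # Vertex sits exactly on a joint: give that joint full influence.
            return {n: (1.0 if n == name else 0.0) for n in joints}
        raw[name] = 1.0 / d ** power
    total = sum(raw.values())
    return {name: w / total for name, w in raw.items()}


# Hypothetical arm joints (x, y, z) and a vertex near the elbow.
joints = {"shoulder": (0.0, 0.0, 0.0), "elbow": (1.0, 0.0, 0.0), "wrist": (2.0, 0.0, 0.0)}
weights = skin_weights((1.1, 0.1, 0.0), joints)
for name, w in weights.items():
    print(f"{name}: {w:.2f}")
```

Straight-line distance ignores the shape of the mesh, so a vertex on the torso can be pulled by a nearby hand joint; artifacts like this make a good starting point for discussing why automated skinning is hard.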

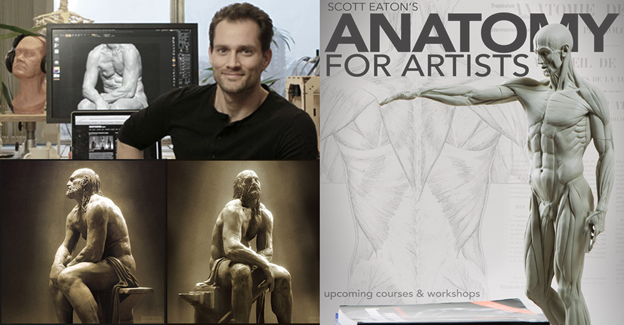

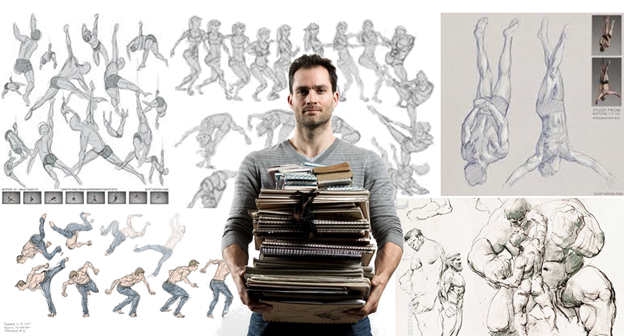

Scott Eaton: Artist+AI: Expanding Creative Horizons

Scott Eaton is a world-class sculptor and one of the most interesting examples of an artist working at the intersection of art and AI.

Scott's art and designs have been featured in Wired Magazine, GQ, Vogue, Vanity Fair, and the New York Times, and have been exhibited around the world.

Scott trained as a classical sculptor in Milan and went on to work on a long list of high-end blockbuster films while based in London and LA.

He then became one of the world’s leaders in teaching anatomy for artists (and digital sculpting), offering both in-person and online classes.

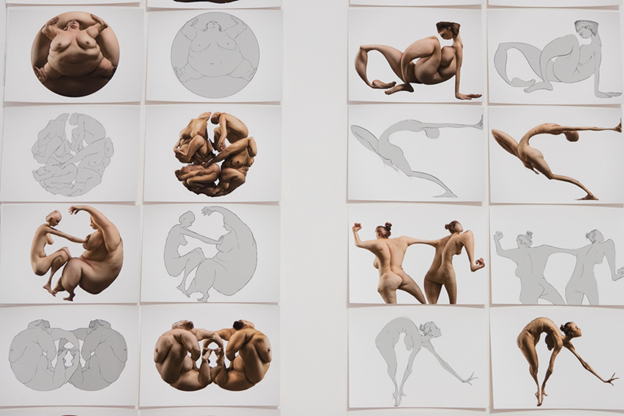

Scott Eaton Mixes Art & AI

In addition to being a world-class sculptor, Scott has also studied engineering and holds a Master's degree from the MIT Media Lab.

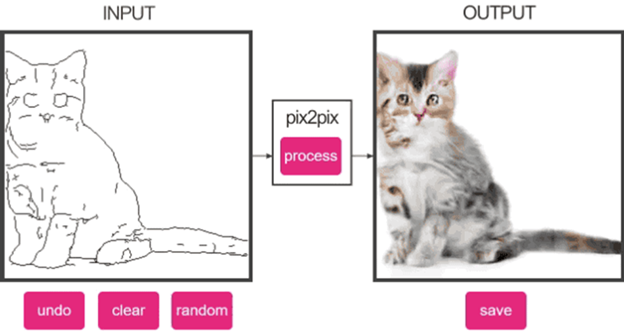

When Scott first played with edges2cats, he immediately thought it was really cool technology. It wasn't long before he began to wonder: if neural networks could be trained to render photoreal cats from simple line drawings, what would happen if he trained them on his own library of photographs of bodies in motion?

This led to three years of ongoing experiments training neural networks.

He has had several major exhibits of this work, he’s been featured in a webinar by Nvidia, he is routinely invited to conferences around the world to present his work, and he was recently invited to give a seminar on “Artist and AI” to a group of researchers at Oxford University.

He even collaborated on a giant piece of art with Jeff Kloos, for a Lady Gaga concert series.

Scott Eaton Links:

Scott Eaton’s Dawson Talk:

In the Winter 2021 semester, Scott Eaton gave a 1.5-hour presentation as part of a Dawson.AI initiative. The talk was entitled "Artist+AI: Expanding Creative Horizons" and can be accessed on Dawson's internal SharePoint site: http://bit.ly/DawsonAIStream

Scott Eaton’s Nvidia Webinar Presentation

“ARTISTS+AI: FIGURES, FORM AND OTHER FANTASTICAL EXPERIMENTS IN DEEP LEARNING” can be viewed here: https://info.nvidia.com/scott-eaton-reg-page.html?ondemandrgt=yes

Scott Eaton’s Website: http://www.scott-eaton.com/

Pix2pix, Edges2cats, Edges2photo

Class Project:

To help students understand and appreciate the work Scott Eaton is creating (and also to expose them to the source of inspiration that sparked his imagination), one interesting class project might entail playing with Pix2Pix, edges2cats, and edges2photo.

This could lead to interesting conversations about conditional adversarial networks.
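
For more advanced students, the training objective described in the pix2pix paper (Isola et al., "Image-to-Image Translation with Conditional Adversarial Networks") can anchor that conversation. Here x is the input (for example an edge drawing), y is the matching real photo, z is random noise, G is the generator, and D is the discriminator, which sees the input alongside either the real or the generated image:

$$
\mathcal{L}_{cGAN}(G, D) = \mathbb{E}_{x,y}\left[\log D(x, y)\right] + \mathbb{E}_{x,z}\left[\log\left(1 - D(x, G(x, z))\right)\right]
$$

$$
\mathcal{L}_{L1}(G) = \mathbb{E}_{x,y,z}\left[\lVert y - G(x, z) \rVert_1\right]
$$

$$
G^{*} = \arg\min_{G} \max_{D} \; \mathcal{L}_{cGAN}(G, D) + \lambda \, \mathcal{L}_{L1}(G)
$$

The discriminator tries to tell real (drawing, photo) pairs from generated ones, while the generator tries both to fool the discriminator and to stay close to the real photo (the L1 term, weighted by λ). The "conditional" part is that both networks always see the input drawing x.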

A more advanced project would be to have the students train their own model using a unique photo dataset of their own design, and then have their classmates interact with it.
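
Paired image-to-image models like pix2pix learn from (input, target) pairs, so for an edges-to-photo model students can generate the inputs automatically from their own photos. The original pix2pix edge datasets used a more sophisticated edge detector; as a stand-in, this dependency-free Python sketch uses a simple 3x3 Sobel filter. The threshold value and the tiny test image are assumptions for illustration.

```python
def sobel_edges(gray, threshold=1.0):
    """Turn a grayscale image (list of rows, values 0.0-1.0) into a binary
    edge map using a 3x3 Sobel filter. Border pixels are left as 0."""
    h, w = len(gray), len(gray[0])
    edges = [[0] * w for _ in range(h)]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            top, mid, bot = gray[y - 1], gray[y], gray[y + 1]
            # Horizontal gradient: weighted right column minus left column.
            gx = (top[x + 1] + 2 * mid[x + 1] + bot[x + 1]) - (top[x - 1] + 2 * mid[x - 1] + bot[x - 1])
            # Vertical gradient: weighted bottom row minus top row.
            gy = (bot[x - 1] + 2 * bot[x] + bot[x + 1]) - (top[x - 1] + 2 * top[x] + top[x + 1])
            if (gx * gx + gy * gy) ** 0.5 >= threshold:
                edges[y][x] = 1
    return edges


def make_pair(photo, threshold=1.0):
    """One training example for an edges-to-photo model: (input, target)."""
    return sobel_edges(photo, threshold), photo


# Tiny hypothetical "photo": dark on the left, bright on the right.
photo = [[0.0, 0.0, 1.0, 1.0, 1.0] for _ in range(5)]
edges, target = make_pair(photo)
for row in edges:
    print(row)
```

In practice students would load their photos with an image library and save each edge map next to its photo; the pairing, rather than the particular edge detector, is the essential idea.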

VFX Automation Using Machine Learning

Many of the jobs traditionally regarded as entry-level positions in the VFX and CG animation industries will be among the first to be affected by AI automation. Rotoscoping is one example.

Rotobot OpenFX is a plugin that uses machine learning to automate visual effects rotoscoping. Rotobot can isolate instances of pixels that belong to 'semantic' classes of objects such as people, cars, etc. By analysing an image with a convolutional neural network (CNN), it can isolate the pixels that belong to any of 81 categories.
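
Once a network has assigned a class to every pixel, turning that result into a roto matte is simple bookkeeping. The Python sketch below does not use Rotobot's API; it assumes a hypothetical per-pixel label map and hypothetical class IDs, and extracts a binary matte for one class.

```python
# Hypothetical class IDs; a real segmentation model defines its own list.
CLASS_IDS = {"background": 0, "person": 1, "car": 3}


def class_matte(label_map, class_name):
    """Convert a per-pixel class label map into a binary matte
    (1 = pixel belongs to the requested class, 0 = everything else)."""
    target = CLASS_IDS[class_name]
    return [[1 if label == target else 0 for label in row] for row in label_map]


# A tiny 3x4 label map such as a segmentation network might output.
labels = [
    [0, 1, 1, 0],
    [0, 1, 1, 3],
    [0, 0, 1, 3],
]
for row in class_matte(labels, "person"):
    print(row)
```

In production such a hard-edged matte would typically still need refinement (soft edges, motion blur, hair detail) before compositing, which is a useful talking point about where human rotoscoping skills still matter.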

FXGuide write-up:

https://www.fxguide.com/quicktakes/rotobot-bringing-machine-learning-to-roto/

Rotobot for Nuke tutorial:

For anyone teaching compositing techniques, an introductory demonstration of Rotobot could serve as a springboard for discussing the growing role of AI in automating various processes within VFX, and the technical aspects of how it works.

“Copycat” inference Machine Learning in VFX Compositing

Nuke has a new feature called CopyCat, which allows a user to train a network to replicate an effect or process that the user has created manually on a few example frames. This makes it easy to automate many time-consuming, repetitive processes.
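
CopyCat itself trains a neural network, which is beyond a short example. The much simpler Python sketch below captures the same "learn from a few hand-made examples" workflow under an explicitly toy assumption: that the artist's effect is a per-pixel brightness change of the form out = gain * in + offset. It learns gain and offset from before/after pixel pairs by least squares, then applies them to a new frame. The pixel values are hypothetical.

```python
def fit_gain_offset(before, after):
    """Least-squares fit of after ~= gain * before + offset."""
    n = len(before)
    mean_x = sum(before) / n
    mean_y = sum(after) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(before, after))
    var = sum((x - mean_x) ** 2 for x in before)
    gain = cov / var
    offset = mean_y - gain * mean_x
    return gain, offset


def apply_effect(frame, gain, offset):
    """Apply the learned effect to every pixel of a new frame (list of rows)."""
    return [[gain * p + offset for p in row] for row in frame]


# Pixels sampled from frames the artist adjusted by hand (hypothetical values).
before = [0.1, 0.2, 0.4, 0.6, 0.8]
after = [0.25, 0.35, 0.55, 0.75, 0.95]   # the artist brightened by 0.15
gain, offset = fit_gain_offset(before, after)
new_frame = [[0.3, 0.5], [0.7, 0.9]]
print(apply_effect(new_frame, gain, offset))
```

A neural network can learn far more complex, spatially varying effects than a gain and offset, but the workflow of training on a handful of manually finished frames and then applying the result to the rest of the shot is the same.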

https://www.fxguide.com/fxfeatured/copycat-inference-machine-learning-in-nuke/

Synthetic datasets for computer vision training, using Unity3D

Many real-world applications rely on computer vision and machine learning. Training computer vision models is limited by the datasets available. Generating massive, custom, diverse, synthetic datasets to train these models is intended to offer greater control and more predictable results.

This can be eye-opening, mind-expanding, and can lead to interesting conversations about the fact that models are only as good as the data sets that are used to train them.
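
A key advantage of synthetic data is that the labels come for free: because the program places every object, it knows exactly where each one is. The dependency-free Python sketch below illustrates this idea (randomizing scene parameters, often called domain randomization) with tiny 2D images that each contain one bright rectangle, paired with a ground-truth bounding box. It does not use Unity's tools; the image size and value ranges are arbitrary assumptions.

```python
import random


def make_sample(rng, size=16):
    """Render one synthetic grayscale image containing a single rectangle
    and return it with its exact bounding box label (x0, y0, x1, y1)."""
    w, h = rng.randint(2, 6), rng.randint(2, 6)
    x0, y0 = rng.randint(0, size - w), rng.randint(0, size - h)
    background = rng.uniform(0.0, 0.3)   # randomized "lighting"
    brightness = rng.uniform(0.7, 1.0)   # randomized object appearance
    image = [[background] * size for _ in range(size)]
    for y in range(y0, y0 + h):
        for x in range(x0, x0 + w):
            image[y][x] = brightness
    return image, (x0, y0, x0 + w, y0 + h)   # right/bottom edges are exclusive


def make_dataset(n, seed=0):
    """Generate n labelled samples; a fixed seed makes the dataset reproducible."""
    rng = random.Random(seed)
    return [make_sample(rng) for _ in range(n)]


dataset = make_dataset(1000)
image, box = dataset[0]
print(len(dataset), "labelled images; first box:", box)
```

Because the generator controls the range of positions, sizes, and lighting, it also controls what the model never sees, which ties directly back to the point that a model is only as good as its training data.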

https://unity.com/products/computer-vision

Deep Fakes, De-Ageing Actors, CG Avatars, & Metahumans

No conversation about AI and VFX/CG would be complete without touching on the cutting-edge world of deepfakes, both from a technical standpoint and in terms of their ethics and implications for our notions of truth and history.

There are now many mobile apps (such as Reface) that make deepfake clips accessible to everyone for entertainment purposes. These make it easy to have students create their own deepfakes.

While these apps can be a fun and easy way to learn about deepfakes, they are worth introducing only alongside warnings about the many ways deepfakes can be used for nefarious or manipulative purposes.

Fake Tom Cruise:

https://www.fxguide.com/fxfeatured/fake-tom-cruise/

Using Machine Learning to De-Age Actors:

https://www.fxguide.com/fxfeatured/machine-learning-in-flame/

Digital Domain has been a world leader in using machine learning to create believable real-time digital avatars of humans:

https://www.youtube.com/watch?v=fMwq9Xa2v2Y

Unreal Engine has released the first edition of the free MetaHuman Creator, which makes the creation of photoreal 3D puppets accessible to all:

https://www.youtube.com/watch?v=S3F1vZYpH8c

AI threatens history itself

Deepfake technology is still in its early stages. And right now, detection is relatively easy, because many deepfakes feel “off.” But as techniques improve, it’s not a stretch to expect that amateur-produced AI-generated or -augmented content will soon be able to fool both human and machine detection in the realms of audio, video, photography, music, and even written text. At that point, anyone with a desktop PC and the right software will be able to create new media artifacts that present any reality they want, including clips that appear to have been generated in earlier eras.

https://www.fastcompany.com/90549441/how-to-prevent-deepfakes

Additional Resources

SIGGRAPH is a fantastic resource when researching cutting-edge topics in CGI and AI:

https://blog.siggraph.org/tag/artificial-intelligence/

FXguide is a fantastic resource when researching cutting-edge topics in VFX and AI:

https://www.fxguide.com/articles/

Guide to Implications of AI on VFX

This is a great resource for teachers looking to gain a quick overview of AI in VFX:

Here is a more recent article by the same author:

https://rossdawson.com/futurist/implications-of-ai/future-of-ai-image-synthesis/

Each of the above links contains topics that could become springboards for entire course lessons.

For those who are comfortable with Python:

Machine learning algorithms generally follow the same pipeline:

Data extraction, cleaning, model training, evaluation, model selection, deployment
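
The stages listed above can be seen end to end in a deliberately tiny Python sketch. The dataset (keyframe counts and hours of animation work) is hypothetical, the two candidate models (a baseline that always predicts the average, and a least-squares straight line) are assumptions chosen for simplicity, and "deployment" is reduced to keeping the winning model.

```python
# Hypothetical raw rows: "number of keyframes, hours of animation work".
RAW = ["10, 2.0", "20, 4.1", "bad, row", "30, 6.0", "40,", "50, 9.9", "60, 12.1"]


def extract(raw_lines):
    """Data extraction: split each text row into two raw fields."""
    rows = []
    for line in raw_lines:
        keyframes, _, hours = line.partition(",")
        rows.append((keyframes.strip(), hours.strip()))
    return rows


def clean(rows):
    """Cleaning: keep only rows where both fields are numeric."""
    data = []
    for keyframes, hours in rows:
        try:
            data.append((float(keyframes), float(hours)))
        except ValueError:
            continue  # drop missing or malformed values
    return data


def train_mean(train):
    """Baseline model: always predict the average number of hours."""
    mean = sum(h for _, h in train) / len(train)
    return lambda k: mean


def train_line(train):
    """Least-squares straight line: hours as a function of keyframes."""
    n = len(train)
    mean_k = sum(k for k, _ in train) / n
    mean_h = sum(h for _, h in train) / n
    slope = (sum((k - mean_k) * (h - mean_h) for k, h in train)
             / sum((k - mean_k) ** 2 for k, _ in train))
    return lambda k: mean_h + slope * (k - mean_k)


def evaluate(model, test):
    """Evaluation: mean absolute error on held-out data."""
    return sum(abs(model(k) - h) for k, h in test) / len(test)


data = clean(extract(RAW))
train, test = data[:3], data[3:]                    # hold out the last rows
candidates = {"mean": train_mean(train), "line": train_line(train)}  # training
scores = {name: evaluate(model, test) for name, model in candidates.items()}
best = min(scores, key=scores.get)                  # model selection
deployed = candidates[best]                         # "deployment": keep the winner
print(best, round(deployed(70), 1))
```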

This article gives one example of extracting data for animation from Mixamo data stored in the .fbx file format:

https://towardsdatascience.com/data-extraction-b9ac0cb645b6
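
Reading .fbx files requires an FBX parser or SDK, which is outside the scope of a short example. The Python sketch below assumes that step has already produced per-frame 3D positions for one joint (the joint, frame rate, and coordinates are hypothetical) and shows the next step: deriving simple animation features such as per-frame speed.

```python
import math


def joint_speeds(positions, fps=30.0):
    """Speed (scene units per second) of a joint between consecutive frames.
    positions: list of (x, y, z) tuples, one per frame."""
    return [math.dist(a, b) * fps for a, b in zip(positions, positions[1:])]


def summarize(positions, fps=30.0):
    """A few per-clip features that a model could later be trained on."""
    speeds = joint_speeds(positions, fps)
    return {
        "frames": len(positions),
        "max_speed": max(speeds),
        "mean_speed": sum(speeds) / len(speeds),
    }


# Hypothetical right-hand positions from four frames of a Mixamo clip.
right_hand = [(0.0, 1.0, 0.0), (0.1, 1.0, 0.0), (0.3, 1.0, 0.0), (0.3, 1.0, 0.0)]
print(summarize(right_hand))
```

Features like these (speeds, distances, joint angles) are the kind of cleaned, numeric data that the later pipeline stages work with.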

Resources for those who wish to learn Python and/or machine learning:

- Korbit (a Dawson College partner)

- https://www.python.org/

- https://learnpythonthehardway.org/python3/

- https://realpython.com/

- Coursera

- Udemy

- LinkedIn Learning

- “Brilliant” Mobile App

Further Reading & Food for Thought

The Age of Surveillance Capitalism

"The Age of Surveillance Capitalism", a book by Harvard Professor Shoshana Zuboff:

https://shoshanazuboff.com/book/about/

“…we need public education, democratic mobilization, and political leadership. We need the creativity and courage to revitalize our frameworks of human rights, laws, and regulations for a new epoch. This next decade is pivotal. It’s all hands on deck.”

Articles by Shoshana Zuboff: https://shoshanazuboff.com/book/recent-work/

Videos featuring Shoshana Zuboff: https://shoshanazuboff.com/book/films-tv/

Podcasts featuring Shoshana Zuboff: https://shoshanazuboff.com/book/podcasts/

Who Owns the Future?

Jaron Lanier is an American computer philosophy writer, computer scientist, visual artist, and composer of contemporary classical music. He is considered a founder of the field of virtual reality (VR). In 2010, Lanier was named to the TIME 100 list of most influential people.

While some of his ideas may be considered controversial, they are all excellent topics for discussion.

“Who Owns The Future?” by Jaron Lanier

“Ten Arguments for Deleting your Social Media Accounts Right Now” by Jaron Lanier

Jaron Lanier’s website, listing all the books he has written: http://www.jaronlanier.com/

Land of the Giants

Land of the Giants podcast (this only scratches the surface of the story of tech giants such as Google, Amazon, and Netflix): https://podcasts.apple.com/us/podcast/land-of-the-giants/id1465767420

Facebook. Apple. Amazon. Netflix. Google. These five tech giants have changed the world. But how? And at what cost? Google’s dominance in everything from search and online advertising to YouTube and Android gives it tremendous power and responsibility. In season three, The Google Empire, Recode’s Shirin Ghaffary and Big Technology’s Alex Kantrowitz explore how Google became one of the most powerful companies in the world and whether it has become too big for our own good.

Coded Bias

To better understand the (sometimes unintended) effects that training datasets can have on machine learning models: https://www.youtube.com/watch?v=jZl55PsfZJQ
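
One concrete way to show students how a dataset can bias a model is to measure accuracy separately for each group of people rather than only overall. The Python sketch below uses made-up results for two hypothetical groups, A and B: the overall accuracy looks acceptable while one group is served much worse, which is the kind of gap "Coded Bias" examines.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """records: list of (group, true_label, predicted_label) tuples.
    Returns overall accuracy and a dict of accuracy per group."""
    correct, total = defaultdict(int), defaultdict(int)
    for group, truth, predicted in records:
        total[group] += 1
        correct[group] += int(truth == predicted)
    overall = sum(correct.values()) / sum(total.values())
    per_group = {g: correct[g] / total[g] for g in total}
    return overall, per_group


# Made-up results: group A is well represented in the test data, group B is not.
records = (
    [("A", 1, 1)] * 90 + [("A", 1, 0)] * 5
    + [("B", 1, 1)] * 3 + [("B", 1, 0)] * 2
)
overall, per_group = accuracy_by_group(records)
print(f"overall: {overall:.0%}", {g: f"{acc:.0%}" for g, acc in per_group.items()})
```

Because group B contributes only five of the hundred examples, its poor results barely move the overall number, which is exactly why per-group evaluation matters.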

Dawson.AI Presentations that I Have Been Involved With

- “Can Essays Written by AI Systems Be the Next Great Cheat Code?” (Presentation given during Dawson College’s Pedagogical Day on October 14, 2020)

- I invited Scott Eaton to deliver a presentation for our students entitled, “Artist+AI: Expanding Creative Horizons”. The webinar is available to the Dawson community via Dawson’s internal SharePoint site and can be found here: http://bit.ly/DawsonAIStream